In this blog post, I want to chronicle my first naive efforts at implementing deep learning. The project never worked but I learnt quite a lot about the process along the way and I think it might be useful for people who are starting out.

After completing the machine learning course of Stanford University I was keen to try my new skill set on some problems. “How about I try to predict share price movement?”, I thought. It seems like a complicated problem but there are numerous examples of it working well enough in the literature and maybe I would be able to earn a few bucks on the side. I chose a neural network architecture for no reason other than they interest me the most. I thought that a regression problem might be too difficult to predict the future value of shares so I decided to make it into a classification problem that would answer the question: will share price increase tomorrow? The project was codenamed ShareBrain.

Scraping the data and preparing the training examples

I used python as the scripting language and found a yahoo-finance package that would download historical data for a given share. The package returned the data in a dictionary list. The below code is my implementation of scraping the data and then saving it in a file locally. If this file already existed then it would be loaded to save downloading it all again.

import sys

import numpy as np

from yahoo_finance import Share #The yahoo-finance package is used to gather the share data

# Srape historical share data

# Inputs:

# - share_name as given by yahoo finance

# - start_date and end_date as yyyy-mm-dd for the range of historical data to find

# - use_existing_data will try and use pre-fetched and stored data if True

# Returns:

# - historical_data in list form ordered oldest to newest

def get_share_data(

share_name='ANZ.AX',

start_date='2005-01-01',

end_date='2016-01-01',

use_existing_data=True):

share_filename = 'Data/' + share_name + '_' + start_date + '_' + end_date +'.npy'

if use_existing_data:

try:

historical_data = np.load(share_filename).tolist()

print("Data successfully loaded from locally stored file")

except:

#Scrape the data for the given settings and exit if there is an error

print("Attempting to scrape data for", share_name)

try:

historical_data = Share(share_name).get_historical(start_date, end_date)

np.save(share_filename, historical_data)

except:

print("Error in scraping share data. Share name is probably incorrect or Yahoo Finance is down.")

quit()

print("Scrape succesful")

else:

print("Attempting to scrape data for", share_name)

try:

historical_data = Share(share_name).get_historical(start_date, end_date)

np.save(share_filename, historical_data)

except:

print("Error in scraping share data. Share name is probably incorrect or Yahoo Finance is down.")

quit()

print("Scrape succesful")

# Reverse the order of the historical data so the list starts at start_date

historical_data.reverse()

return(historical_data)

The values that I wanted from the historical data were the opening, closing, high and low prices, as well as the volume of shares traded. Now to form the training data for the neural network I grouped together these data points for a number of days. The default value was for 30 days worth of data to be concatenated in an overlapped fashion (i.e. day 1 to day 30, then day 2 to day 31, then day 3 to day 32, etc.) The classifier was then set up to give a label of 1 if the next day’s closing share price was greater than the closing share price of the last day in the training input, otherwise the example was labelled as 0. The below code performs these steps and returns a numpy array with training examples set up in each row.

# Process the historical share data with boolean training target

# Inputs

# - historical_data as returned from get_share_data

# - days_of_data is the number of consecutive days to be converted into inputs

# Returns

# - training_input array with the share's volume, high, low, open, and close price for

# the number of days specified in days_of_data between the start_date and end_date.

# - training_target array consist of a boolean value indicating if the closing price

# tomorrow is greater than the closing price today.

def proc_share_bool_target(

historical_data,

days_of_data=30):

# Process the returned data into 3 lists of: open_price, close_price, and volume

try:

open_price = [float(historical_data[i]['Open']) for i in range(0,len(historical_data)) ]

close_price = [float(historical_data[i]['Close']) for i in range(0,len(historical_data)) ]

volume = [float(historical_data[i]['Volume']) for i in range(0,len(historical_data)) ]

high_price = [float(historical_data[i]['High']) for i in range(0,len(historical_data)) ]

low_price = [float(historical_data[i]['Low']) for i in range(0,len(historical_data)) ]

except ValueError:

print("Error in processing share data.")

quit()

# Take the historical data and form a training set for the neural net.

# Each training example is built from a range of days and contains:

# the open and close share price on each day in the range.

# The output is boolean indicating if the close price tomorrow is greater than today.

training_input = np.array([])

training_target = np.array([])

training_example_number = len(open_price) - days_of_data

for i in range(0, training_example_number):

training_input = np.append(training_input, volume[i:i+days_of_data])

training_input = np.append(training_input, high_price[i:i+days_of_data])

training_input = np.append(training_input, low_price[i:i+days_of_data])

training_input = np.append(training_input, open_price[i:i+days_of_data])

training_input = np.append(training_input, close_price[i:i+days_of_data])

training_target = np.append(training_target, close_price[i+days_of_data] > close_price[i+days_of_data-1] )

# The above for loop makes 1-dim arrays with the values in them. Need to use reshape

# on training input to make it 2-dim. Number of columns is 3*days_of_data to account for

# open price, close price and volume. The -1 in reshape will be filled

# automatically and (days_of_data +1) is the number of columns for each input. Likewise

# for the target array.

training_input = np.reshape(training_input, (-1, 5*days_of_data))

training_target = np.reshape(training_target, (-1,))

return (training_input, training_target)

Implementing the neural network

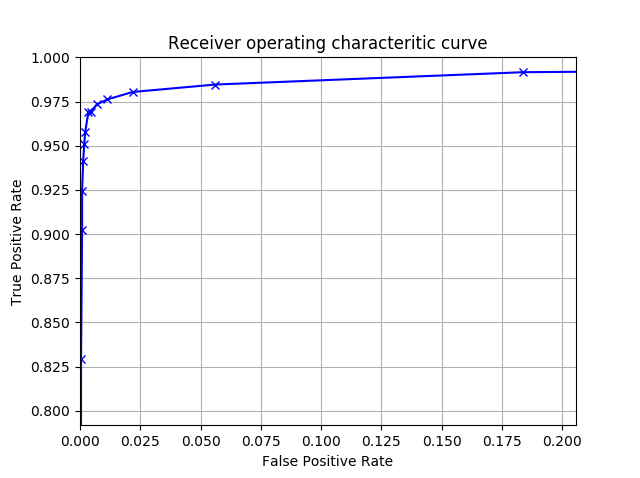

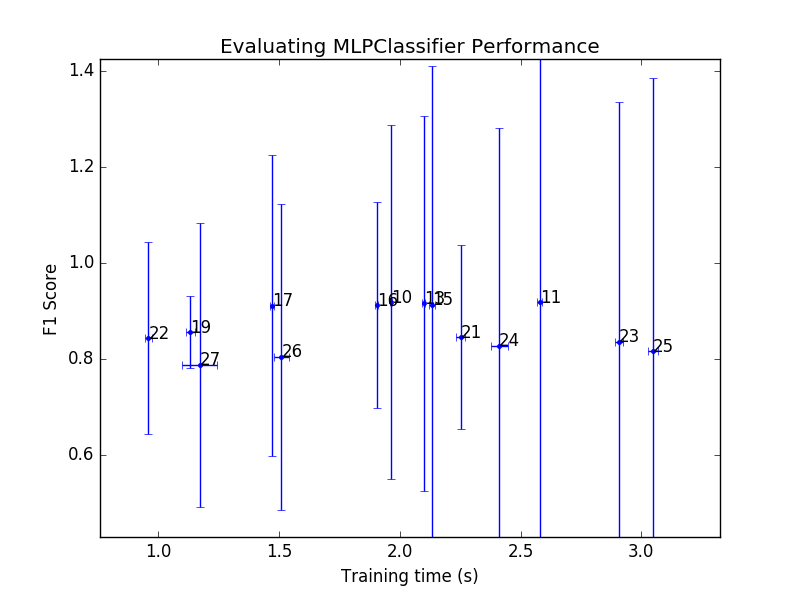

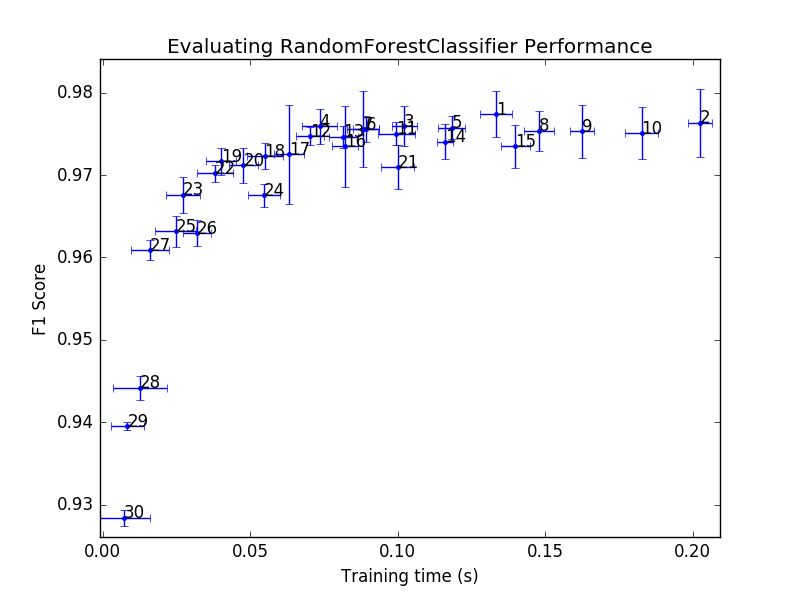

I used scikit-learn to perform the neural network training and my plan was to test different levels of complexity by varying the number of hidden layers and neurons. The standardscaler provided in this library also was useful in normalising the columns of the training array to have a range from -1 to +1. The training step was placed in a loop that would retrain the algorithm until a certain accuracy was attained (it is set to 10% below for reasons that will become clear soon).

# Common libraries

import sys

import numpy as np

import matplotlib.pyplot as plt

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neural_network import MLPClassifier

# My libraries

import sharescraper

# Boolean prediction of whether the close_price tomorrow will be greater than today.

# Get training input and targets from sharescraper

historical_data = sharescraper.get_share_data(

share_name='ANZ.AX',

start_date='1920-01-01',

end_date='2016-01-01',

use_existing_data=True)

(price_input, boolean_target) = sharescraper.proc_share_bool_target(

historical_data,

days_of_data=10)

# Separate data into training set and test set

random_number = 0

test_split = 0.3

X_train, X_test, y_train, y_test = train_test_split(

price_input, boolean_target, test_size=test_split, random_state=random_number)

# Feature scale the training data and apply the scaling to the training and test datasets.

scaler = StandardScaler()

scaler.fit(X_train)

X_train = scaler.transform(X_train)

X_test = scaler.transform(X_test)

# Set up the MLPClassifier

clf = MLPClassifier(

activation = 'tanh',

learning_rate = 'adaptive',

solver ='adam',

hidden_layer_sizes=(10),

alpha = 0.01,

max_iter = 10000,

tol = 1E-8,

warm_start = False,

verbose = True )

# Train the network on the dataset until the test_accuracy raches a threshold

accuracy_check = True

while accuracy_check:

# Train the neural network on the dataset

clf.fit(X_train, y_train)

# Use the cross validation set to calculate the accuracy of the network

test_accuracy = clf.score(X_test, y_test)

train_accuracy = clf.score(X_train, y_train)

print("The network fitted the test data {:.3f}% and the training data {:.3f}%."

.format(test_accuracy*100, train_accuracy*100))

accuracy_check = test_accuracy < 0.1

The mistake

When I first ran my training code I was pleasantly surprised to see that there was an accuracy of just of 60%. “Huh, that seemed to work,” I naively thought. Dollar signs began spinning in my mind as even a slight advantage over chance (accuracy of 50%) on the stock market can snowball into large gains if properly managed. There were a few minor errors here and there which I fixed up quickly – mainly to do with some data missing from the training array as I hadn’t implemented the date range correctly.

After fixing up these small errors and thoroughly debugging the code (or so I thought), I retrained the network and this time had an accuracy of over 80%, 80%! “Wow, these neural networks really work great and I haven’t even had to do very much.” “Why isn’t everyone using this method as it is proving super effective?” I thought to myself. I was expecting a small increase over chance, not something as high as 80%! With the little effort I had put into optimising the algorithm’s hyperparameters, this seemed remarkable. Too remarkable.

I started to toy with the hidden layers and number of neurons. The initial implementation had something like 3 layers with 100 neurons in each. So I cut that down to 2 layers with only 10 neurons in each and retrained the data. 80% accuracy. Removing more neurons and layers down to 1 layer with 5 neurons. 80% accuracy. 1 layer with 1 neuron. 80% accuracy. “Uh oh, this doesn’t seem right at all.” Could it be that the intricacies of the share market could be predicted by something simpler than a fruit fly? It certainly didn’t seem so.

This lead me on a big debugging round. What could possibly be causing my incredibly high training accuracy? It wasn’t overfitting or anything to do with regularisation. In fact changing this parameter and many others had barely any effect on the accuracy. It seemed that 80% was always attainable. I then shifted my focus to the data scraping and the mistake revealed itself. I had assumed that the data was ordered from oldest to newest in the returned dictionary list but it was actually the opposite.

Being outsmarted by a neural network

So the historical data was backwards when being used in my training set. But how would that impact the training accuracy? I first thought that it would still be the same problem just a little bit backwards with the network predicting what happened before the training data as opposed to afterwards. Surely this couldn’t be responsible. However on further inspection it makes perfect sense.

I am using the opening (O) and closing (C) price for each day and the classification is based on whether the closing price for the next day outside of the range is higher or lower than the closing price of the last day in the range. What this looks like is below.

<---------------Days in training data range---------------> <-Future

O C | O C | O C | ... | O C | O C | O C | O C

^Compares^

However, this is not what I was doing as I had the dates backwards. Below is more representative of the training data and comparison that I was making.

Past-> <---------------Days in training data range--------------->

O C | O C | O C | O C | ... | O C | O C | O C

^Compares^

This is where the problem lies. In the training data the closing share price and the opening share price of the next day are the same 60% of the time. This is due to the valuation not changing when the stock market is closed from one day to the next. So all the neural network had to do was compare the opening and closing price for the one day in the range and it would be able to correctly predict the movement of the share price. The remaining 40% of the data is just being guessed with an accuracy of 50% so this adds another 20% to the accuracy which gives an overall accuracy of 80%. Mystery solved.

Moving forward

After this major problem had been solved, the accuracy of the classifier algorithm dropped back down to a more sensical 50%. I wasn’t really able to increase this any further by changing the architecture of the hidden layers and neurons. I considered adding some more contextual information in the form of scraping news websites and twitter feeds to capture community sentiment but my passion for the project had steadily declined. This was my first project and I wanted to do something that had more than a fighting chance of working. Maybe I will return to it one day and post any updates on this blog. At least I learnt a lot in how to tell if neural networks are operating correctly or whether they are just smarter than the user programming them!

The code for this project is available on my github.